We rarely think about the brain without tools. Not because we would not want to, but because the brain does not lend itself easily to observation without metaphors. And those metaphors are often drawn from the dominant technologies of the time.

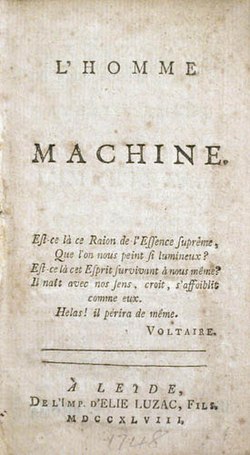

That pattern is very old. Consider the idea of the child as a tabula rasa, an unwritten slate or, more precisely, a smooth wax tablet, an idea later articulated most clearly by Locke and often too readily attributed to Aristotle. Across different periods, the brain has been described as a kind of telephone exchange, as a hydraulic system, as a computer, as a network, and even as a box of Lego. Each time, the technology of the moment provided a framework for making something elusive somewhat intelligible. And each time, that framework highlighted certain aspects: what counts as information, what counts as processing, what counts as output.

In the second half of the twentieth century, the computer became the dominant metaphor. Thinking was framed as input–processing–output. Memory became storage. Learning became the efficient encoding and retrieval of information. This metaphor was productive. It led to testable models, better instruments of measurement, and genuine progress in cognitive psychology. But it also had blind spots. Emotion, meaning, context, and motivation fit only awkwardly into such a scheme.

Later, the network moved to the foreground. Connectivism as proposed by Siemens and Downes, distributed knowledge, learning as participation in networks. This, too, was not nonsense. It helped take social and digital dimensions of learning seriously. But once again, something familiar happened: because the network was a powerful description of how knowledge circulates, it sometimes became an explanation of how knowledge comes into being or is accessed. And once again, part of the individual faded from view.

Today, artificial intelligence, and large language models in particular, is the new hype. Do not be surprised if this becomes our dominant metaphor, even though there is a deep irony in this if one reflects on it a little longer. We speak of layers, representations, and predictions. Of embeddings that grow ever more abstract. Of systems that “understand” language by predicting the next word. And inevitably, we ask: Does our brain work the same way?

Recent research by Goldstein and colleagues shows just how tempting this thought is. Brain signals recorded while people listen to a story turn out to correlate remarkably well, over time, with the internal layers of large language models. Early layers align better with earlier brain activity, while later layers align better with later activity. It is impressive research, methodologically strong, and it reveals something that was previously difficult to observe: a fine-grained picture of how language processing unfolds over time in the brain.

But this is precisely where the metaphor becomes dangerously attractive. It is one thing to observe that two systems display similar structures. It is quite another to conclude that they do the same thing, in the same way, or are even the same.

The fact that language models have a hierarchical representation structure does not imply that the brain processes tokens. The fact that they predict well does not mean that human language processing can be reduced to next-word prediction (even if this mutual prediction works rather well in my relationship, too). What it mainly shows is that these models, precisely because they are so powerful, impose a new grid on our data. They allow us to see patterns that were previously invisible. But they may also determine which patterns we end up looking for in the first place.

That is no reason to distrust such metaphors. It is, however, a reason to keep them in their proper place. Technology is rarely a neutral mirror of reality. It is a pair of glasses. And every pair of glasses sharpens certain contours while blurring others.

What is striking is how this mechanism keeps repeating itself. Each new technology corrects the previous metaphor, but at the same time installs its own set of assumptions. The computer metaphor made internal mental processes discussable again after behaviourism. The network corrected some of the limits of computer-based thinking. And now AI, in turn, is correcting the network. Yet each time, we risk forgetting that this new description is also provisional.

Perhaps that is not a problem. Perhaps we can only understand the brain through temporary metaphors. What does become problematic is when we mistake those metaphors for explanations—when a model is no longer a tool for thinking, but an answer to the question of how thinking “really” works.

History suggests something else. Not that metaphors are wrong, but that they lose their value once we forget that they are metaphors. The brain is not a computer. It was not one in 1985, it was not one in 2005, and it is not one in 2026. But every generation will once again need technology to say something meaningful about it.

The challenge, then, is not to find the one true metaphor and fix it in place forever. The challenge is to retain, with every new metaphor, the discipline to keep seeing its limits. Perhaps the fact that today we like to think in terms of layers, predictions, and embeddings says as much about our technology as it does about our brains.